Many real-world applications use similarity measures to quantify how closely two objects are related. For example, in Computer Vision and Natural Language Processing we can use them to find and match similar documents. One important business use case is matching resumes against a job description, which saves the recruiter a considerable amount of time. Another is segmenting customers for marketing campaigns with the K-Means clustering algorithm, which also relies on a similarity (distance) measure. Similarity scores usually range from 0 (no similarity) to 1 (complete similarity). We will specifically discuss two important similarity metrics, Euclidean distance and cosine similarity, along with a coding example that works with Wikipedia articles.

Euclidean Metric

Do you remember Pythagoras' theorem? It is used to calculate the distance between two points, as indicated in the figure below. Generalized to any number of dimensions, it gives the Euclidean distance:

$$d(x, y) = \sqrt{\sum_{k=1}^{n} (x_k - y_k)^2}$$

This defines the Euclidean distance between two points in one-, two-, three- or higher-dimensional space, where n is the number of dimensions and x_k and y_k are the k-th components of x and y respectively.

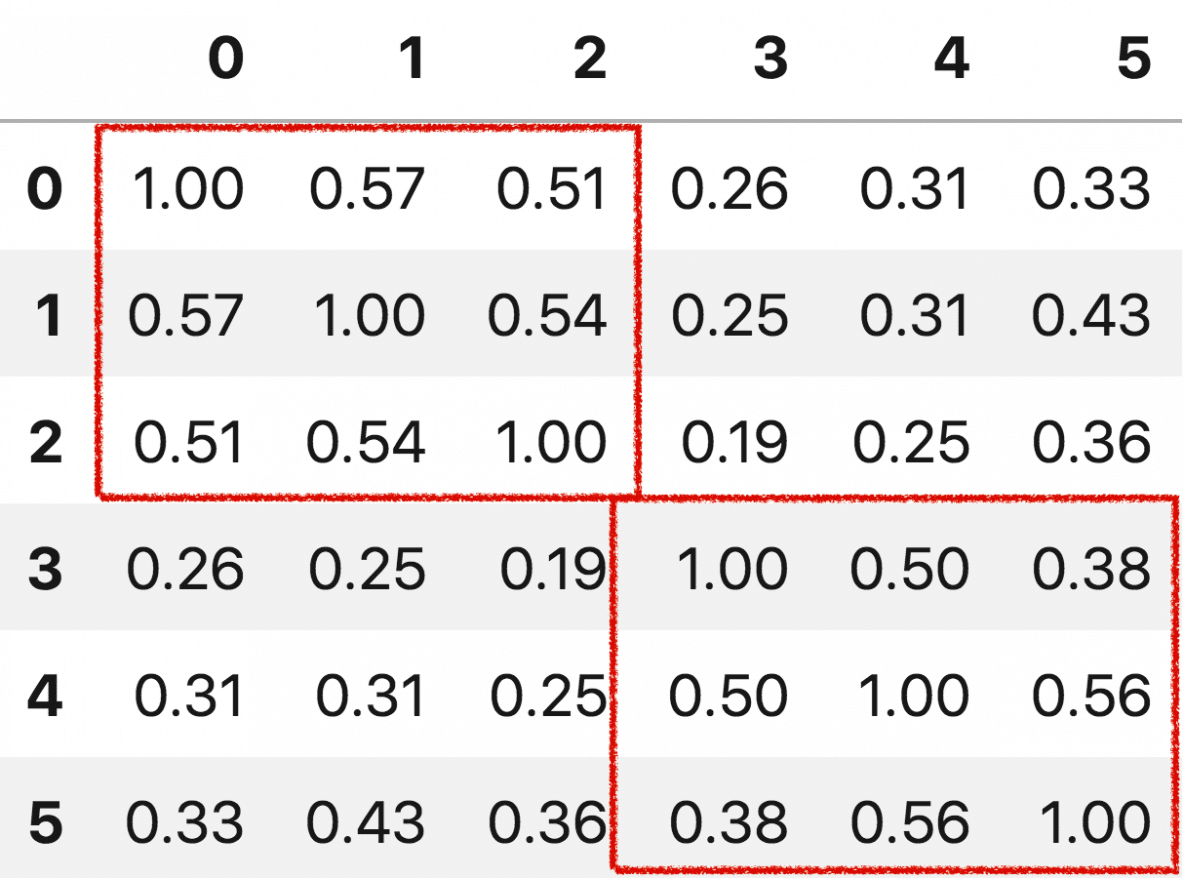
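The Euclidean distance d(x, y) = sqrt(sum over k of (x_k - y_k)^2) can be sketched in a few lines of Python. This is a minimal illustration, not code from the article: the function name `euclidean_distance` and the sample points are hypothetical. The standard library's `math.dist` (Python 3.8+) computes the same quantity and is a convenient cross-check.

```python
import math
from typing import Sequence


def euclidean_distance(x: Sequence[float], y: Sequence[float]) -> float:
    """Hypothetical helper: d(x, y) = sqrt(sum_k (x_k - y_k)^2) for two n-dimensional points."""
    if len(x) != len(y):
        raise ValueError("x and y must have the same number of dimensions")
    # Sum the squared component-wise differences, then take the square root
    return math.sqrt(sum((xk - yk) ** 2 for xk, yk in zip(x, y)))


# 1-D: the distance is just the absolute difference
print(euclidean_distance([2], [-1]))             # 3.0
# 2-D: Pythagoras' theorem with legs 3 and 4 gives a hypotenuse of 5
print(euclidean_distance([0, 0], [3, 4]))        # 5.0
# 3-D: the differences (3, 4, 0) again give 5
print(euclidean_distance([1, 2, 3], [4, 6, 3]))  # 5.0
# Cross-check against the standard library
print(math.dist([0, 0], [3, 4]))                 # 5.0
```

The 2-D call is exactly the Pythagoras case from the figure; the same function works unchanged for any n.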

What is cosine similarity? Cosine similarity is used in information retrieval and text mining. It measures the similarity between two vectors as the cosine of the angle between them. If you have two documents and want to find how similar they are, you represent each one as a vector and compute the cosine of the angle between the two vectors. A value of 1 or close to 1 means the vectors point in the same direction, so the documents are very similar. A value close to -1 means they point in opposite directions. For vectors with no negative entries, such as word counts or TF-IDF weights, the value lies between 0 and 1. With a large collection of documents, you can use the pairwise cosine similarities to cluster them, which helps you find related documents.

We can import scikit-learn's cosine similarity function from `sklearn.metrics.pairwise`. It calculates the cosine similarity between two NumPy arrays. Below, we implement cosine similarity step by step.

Suppose you have two documents of different sizes. How will you compare them, or find the similarity between them? Cosine similarity is a metric that lets you measure the similarity of documents. The formula for the cosine similarity of two vectors A and B is:

$$\cos(\theta) = \frac{A \cdot B}{\|A\| \, \|B\|} = \frac{\sum_{i=1}^{n} A_i B_i}{\sqrt{\sum_{i=1}^{n} A_i^2} \, \sqrt{\sum_{i=1}^{n} B_i^2}}$$

We will implement this in a few small steps.

Step 1: Importing packages – First, we import the cosine_similarity function from the sklearn.metrics.pairwise module. We also import NumPy for array creation.

```python
from sklearn.metrics.pairwise import cosine_similarity
import numpy as np
```

Step 2: Vector creation – Next, to demonstrate the cosine similarity function, we need vectors.

```python
array_vec_1 = np.array([[...]])
array_vec_2 = np.array([[...]])
```

Step 3: Cosine similarity – Finally, once we have the vectors, we call cosine_similarity() with both of them. It calculates the cosine similarity between the two. A value of 0 means the vectors are orthogonal (completely different), and a value of 1 means they point in the same direction (completely similar).

```python
cosine_similarity(array_vec_1, array_vec_2)
```

Complete code with output – Let's put the code from each step together. Here it is:

```python
from sklearn.metrics.pairwise import cosine_similarity
import numpy as np

# Each vector is a 2-D array of shape (1, n_features)
array_vec_1 = np.array([[...]])
array_vec_2 = np.array([[...]])

# Returns a (1, 1) array holding the cosine similarity of the two vectors
print(cosine_similarity(array_vec_1, array_vec_2))
```

After applying this function, we got a cosine similarity of around 0.45227, which signifies that the vectors are neither very similar nor very different. In a real scenario, we use text embeddings as NumPy vectors. We can use TF-IDF, a count vectorizer, FastText, BERT, etc. to generate these embeddings.

Conclusion – Cosine similarity is one of the best ways to judge or measure the similarity between documents. Because it depends only on the angle between the vectors and not on their lengths, it works well irrespective of document size. We can also implement it without the sklearn module. I hope this article has made the implementation clear. If you still find any information gaps, please let us know.
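Since cosine similarity can also be implemented without sklearn, here is a minimal sketch, assuming Python with NumPy and scikit-learn installed. `cosine_sim` is a hypothetical helper that applies the formula directly, and the vectors and three short documents are made-up examples, so they do not reproduce the 0.45227 result reported above. `TfidfVectorizer` and `cosine_similarity` are real scikit-learn APIs, used here to show the TF-IDF route to embeddings.

```python
import numpy as np
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.metrics.pairwise import cosine_similarity


def cosine_sim(a: np.ndarray, b: np.ndarray) -> float:
    """Hypothetical helper: cos(theta) = (a . b) / (||a|| * ||b||) for 1-D vectors."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))


# Made-up example vectors
vec_1 = np.array([1.0, 2.0, 3.0])
vec_2 = np.array([2.0, 4.0, 6.0])   # same direction as vec_1, twice as long
vec_3 = np.array([3.0, 0.0, -1.0])  # orthogonal to vec_1: 1*3 + 2*0 + 3*(-1) = 0

print(cosine_sim(vec_1, vec_2))  # 1.0 up to floating-point rounding: length does not matter
print(cosine_sim(vec_1, vec_3))  # 0.0: no similarity

# sklearn expects 2-D inputs of shape (n_samples, n_features), hence the reshape
print(cosine_similarity(vec_1.reshape(1, -1), vec_2.reshape(1, -1)))

# Made-up documents turned into TF-IDF vectors, then compared pairwise
docs = [
    "the cat sat on the mat",
    "the cat lay on the mat",
    "stock prices fell sharply today",
]
tfidf = TfidfVectorizer().fit_transform(docs)  # sparse matrix, one row per document
sims = cosine_similarity(tfidf)                # dense 3 x 3 matrix of pairwise similarities
print(np.round(sims, 3))
```

The reshape explains why the article's vectors are wrapped in double brackets: `cosine_similarity` works on 2-D arrays. Passing a single matrix returns every pairwise similarity at once, a convenient starting point for clustering documents. Here the two "cat" sentences score well above 0, while the unrelated third document shares no terms with them and scores 0.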
